Why machine learning is unavoidable?

Table of Contents

- What is Machine Learning?

- The Machine Learning Approach

- Some examples of tasks best solved by learning

- A standard example of machine learning

- It is very hard to say what makes a 2

- Beyond MNIST: The ImageNet task

- Some examples from an earlier version of the net

- It can deal with a wide range of objects

- It makes some really cool errors

- The Speech Recognition Task

- Phone recognition on the TIMIT benchmark

- Word error rates from MSR, IBM, & Google

- Transcript

- What are neural networks?

- Some simple models of neurons

Why do we need machine learning?

What is Machine Learning?

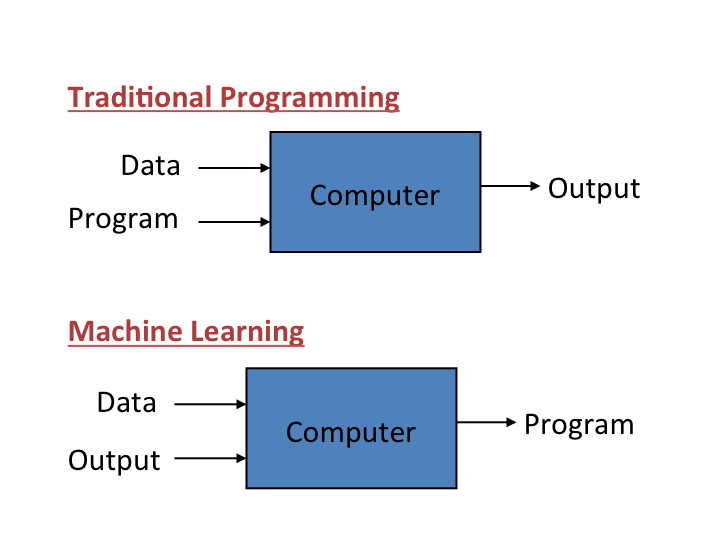

Figure 1: ML distinction

It is very hard to write programs that solve problems like recognizing a three-dimensional object from a novel viewpoint in new lighting conditions in a cluttered scene.

- We don’t know what program to write because we don’t know how it’s done in our brain.

- Even if we had a good idea about how to do it, the program might be horrendously complicated.

It is hard to write a program to compute the probability that a credit card transaction is fraudulent.

- There may not be any rules that are both simple and reliable. We need to combine a very large number of weak rules.

- Fraud is a moving target. The program needs to keep changing.

The Machine Learning Approach

Instead of writing a program by hand for each specific task, we collect lots of examples that specify the correct output for a given input. A machine learning algorithm then takes these examples and produces a program that does the job.

- The program produced by the learning algorithm may look very different from a typical hand-written program. It may contain millions of numbers.

- If we do it right, the program works for new cases as well as the ones we trained it on.

- If the data changes the program can change too by training on the new data.

Massive amounts of computation are now cheaper than paying someone to write a task-specific program.
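As a rough illustration of "examples in, program out", here is a minimal Python sketch. The nearest-centroid rule, the `learn` function and the toy data are all assumptions chosen for brevity; they are not the method used in the lecture. The point is only that the returned `predict` function is defined by numbers computed from the examples, and that retraining on new data yields a new program.

```python
def learn(examples):
    """Take (input_vector, label) pairs and return a predict function."""
    sums, counts = {}, {}
    for x, label in examples:
        acc = sums.setdefault(label, [0.0] * len(x))
        for i, value in enumerate(x):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    # The "program" is just these learned numbers: one mean vector per label.
    centroids = {lab: [s / counts[lab] for s in acc] for lab, acc in sums.items()}

    def predict(x):
        def sq_dist(c):
            return sum((a - b) ** 2 for a, b in zip(x, c))
        return min(centroids, key=lambda lab: sq_dist(centroids[lab]))

    return predict


# Hypothetical training examples: (input vector, correct output).
examples = [
    ([1.0, 1.2], "small"),
    ([0.8, 1.0], "small"),
    ([5.0, 4.8], "large"),
    ([5.2, 5.1], "large"),
]
predict = learn(examples)
print(predict([1.5, 1.5]))  # a new case that was not in the training set
```

If the data changes, calling `learn` again on the new examples produces an updated `predict` without anyone rewriting code by hand.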

Some examples of tasks best solved by learning

Recognizing patterns:

- Objects in real scenes

- Facial identities or facial expressions

- Spoken words

Recognizing anomalies:

- Unusual sequences of credit card transactions

- Unusual patterns of sensor readings in a nuclear power plant

Prediction:

- Future stock prices or currency exchange rates

- Which movies will a person like?

A standard example of machine learning

A lot of genetics is done on fruit flies.

- They are convenient because they breed fast.

- We already know a lot about them.

The MNIST database of hand-written digits is the machine learning equivalent of fruit flies.

- They are publicly available and we can learn them quite fast in a moderate-sized neural net.

- We know a huge amount about how well various machine learning methods do on MNIST.

We will use MNIST as our standard task.

It is very hard to say what makes a 2

Beyond MNIST: The ImageNet task

1000 different object classes in 1.3 million high-resolution training images from the web.

- Best system in 2010 competition got 47% error for its first choice and 25% error for its top 5 choices.

Jitendra Malik (an eminent neural net sceptic) said that this competition is a good test of whether deep neural networks work well for object recognition.

- A very deep neural net (Krizhevsky et al. 2012) gets less than 40% error for its first choice and less than 20% for its top 5 choices (see lecture 5).
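The "first choice" and "top 5" error rates quoted above are two settings of the same measure. A minimal Python sketch follows, assuming each prediction is a list of class names ranked from most to least confident; the function name `top_k_error` and the four example predictions are hypothetical, not taken from the competition data.

```python
def top_k_error(ranked_guesses, labels, k):
    """Fraction of cases whose correct label is not among the first k guesses."""
    misses = sum(
        1 for guesses, label in zip(ranked_guesses, labels)
        if label not in guesses[:k]
    )
    return misses / len(labels)


# Hypothetical ranked guesses for four images, best guess first.
ranked_guesses = [
    ["snowplow", "drilling platform", "lifeboat"],
    ["otter", "quail", "ruffed grouse"],
    ["guillotine", "orangutan", "broom"],
    ["microwave", "electric range", "stove"],
]
labels = ["snowplow", "quail", "cherry", "electric range"]

print(top_k_error(ranked_guesses, labels, k=1))  # 0.75
print(top_k_error(ranked_guesses, labels, k=2))  # 0.25
```

Counting a prediction as correct when the label appears anywhere in the first k guesses is why the top-5 figure is always at least as good as the first-choice figure.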

Some examples from an earlier version of the net

It can deal with a wide range of objects

It makes some really cool errors

The Speech Recognition Task

A speech recognition system has several stages:

- Pre-processing: Convert the sound wave into a vector of acoustic coefficients. Extract a new vector about every 10 milliseconds.

- The acoustic model: Use a few adjacent vectors of acoustic coefficients to place bets on which part of which phoneme is being spoken.

- Decoding: Find the sequence of bets that does the best job of fitting the acoustic data and also fitting a model of the kinds of things people say.

Deep neural networks pioneered by George Dahl and Abdel-rahman Mohamed are now replacing the previous machine learning method for the acoustic model.
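The first two stages can be sketched in Python to show where "a new vector about every 10 milliseconds" and "a few adjacent vectors" come from. The 16 kHz sample rate, the 25 ms analysis window and the function names are assumptions made for illustration, and the step that turns each window of samples into acoustic coefficients is left out.

```python
def frame_starts(num_samples, sample_rate, hop_ms=10, window_ms=25):
    """Sample index at which each analysis window starts, one every hop_ms."""
    hop = sample_rate * hop_ms // 1000
    window = sample_rate * window_ms // 1000
    return list(range(0, num_samples - window + 1, hop))


def stack_context(frames, width):
    """Concatenate `width` adjacent frame vectors around each centre frame."""
    half = width // 2
    stacked = []
    for centre in range(half, len(frames) - half):
        window = frames[centre - half:centre + half + 1]
        stacked.append([value for frame in window for value in frame])
    return stacked


# One second of audio at an assumed 16 kHz sample rate.
starts = frame_starts(num_samples=16000, sample_rate=16000)
print(len(starts))  # 98 complete windows, i.e. roughly 100 vectors per second

# Toy one-dimensional "coefficient vectors", stacked three at a time.
print(stack_context([[1], [2], [3], [4]], width=3))  # [[1, 2, 3], [2, 3, 4]]
```

The stacked vectors are what the acoustic model would see when it places its bets on the phoneme part at the centre of the window.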

Phone recognition on the TIMIT benchmark

(Mohamed, Dahl, & Hinton, 2012)

Figure: the network, from top to bottom: 183 HMM-state labels (not pre-trained); 2000 logistic hidden units; 5 more layers of pre-trained weights; 2000 logistic hidden units; 2000 logistic hidden units; 15 frames of 40 filterbank outputs + their temporal derivatives.

- After standard post-processing using a bi-phone model, a deep net with 8 layers gets 20.7% error rate.

- The best previous speaker-independent result on TIMIT was 24.4% and this required averaging several models.

- Li Deng (at MSR) realised that this result could change the way speech recognition was done.

Word error rates from MSR, IBM, & Google

(Hinton et al., IEEE Signal Processing Magazine, Nov 2012)

| The task | Hours of training data | Deep neural network | Gaussian Mixture Model | GMM with more data |
| --- | --- | --- | --- | --- |
| Switchboard (Microsoft Research) | 309 | 18.5% | 27.4% | 18.6% (2000 hrs) |
| English broadcast news (IBM) | 50 | 17.5% | 18.8% | |
| Google voice search (android 4.1) | 5,870 | 12.3% (and falling) | | 16.0% (>>5,870 hrs) |
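One way to read these word error rates is as relative reductions against the Gaussian mixture baseline. The Python sketch below uses only the figures quoted above; the helper name `relative_reduction` is an assumption, and for Google voice search the only available baseline is the GMM trained on more data, so that comparison is not like for like.

```python
def relative_reduction(baseline, new):
    """Fraction of the baseline error rate removed by the new system."""
    return (baseline - new) / baseline


# (GMM word error rate, deep neural network word error rate), in percent.
results = {
    "Switchboard (Microsoft Research)": (27.4, 18.5),
    "English broadcast news (IBM)": (18.8, 17.5),
    "Google voice search": (16.0, 12.3),  # GMM figure is from more training data
}

for task, (gmm, dnn) in results.items():
    print(f"{task}: {100 * relative_reduction(gmm, dnn):.1f}% relative reduction")
```

On these numbers the relative reduction is roughly a third for Switchboard, about 7% for broadcast news, and about 23% for voice search.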

Transcript

Hello. Welcome to the Coursera course on Neural Networks for Machine Learning.

Before we get into the details of neural network learning algorithms, I want to talk a little bit about machine learning, why we need machine learning, the kinds of things we use it for, and show you some examples of what it can do. So the reason we need machine learning is that there are some problems where it's very hard to write the programs. Recognizing a three-dimensional object, for example, when it's from a novel viewpoint in new lighting conditions in a cluttered scene, is very hard to do. We don't know what program to write because we don't know how it's done in our brain. And even if we did know what program to write, it might be that it was a horrendously complicated program. Another example is detecting a fraudulent credit card transaction, where there may not be any nice, simple rules that will tell you it's fraudulent.

You really need to combine a very large number of not very reliable rules. And also, those rules change over time because people change the tricks they use for fraud. So we need a complicated program that combines unreliable rules, and that we can change easily. The machine learning approach is to say, instead of writing each program by hand for each specific task, we collect a lot of examples that specify the correct output for a given input. A machine learning algorithm then takes these examples and produces a program that does the job. The program produced by the learning algorithm may look very different from the typical handwritten program. For example, it might contain millions of numbers about how you weight different kinds of evidence. If we do it right, the program should work for new cases just as well as the ones it's trained on. And if the data changes, we should be able to change the program very easily by retraining it on the new data. And now massive amounts of computation are cheaper than paying someone to write a program for a specific task, so we can afford big complicated machine learning programs to produce these task-specific systems for us.

Some examples of the things that are best done by using a learning algorithm are recognizing patterns, so for example objects in real scenes, or the identities or expressions of people's faces, or spoken words. There's also recognizing anomalies. So, an unusual sequence of credit card transactions would be an anomaly. Another example of an anomaly would be an unusual pattern of sensor readings in a nuclear power plant. And you wouldn't really want to have to deal with those by doing supervised learning, where you look at the ones that blow up and see what caused them to blow up. You'd really like to recognize that something funny is happening without having any supervision signal. It's just not behaving in its normal way. And then there's prediction. So, typically, predicting future stock prices or currency exchange rates, or predicting which movies a person will like from knowing which other movies they like and which movies a lot of other people liked.

So in this course I'm going to use a standard example for explaining a lot of the machine learning algorithms. This is done in a lot of science. In genetics, for example, a lot of genetics is done on fruit flies. And the reason is they're convenient. They breed fast and a lot is already known about the genetics of fruit flies. The MNIST database of handwritten digits is the machine learning equivalent of fruit flies. It's publicly available. We can get machine learning algorithms to learn how to recognize these handwritten digits quite quickly, so it's easy to try lots of variations. And we know huge amounts about how well different machine learning methods do on MNIST. And in particular, the different machine learning methods were implemented by people who believed in them, so we can rely on those results. So for all those reasons, we're gonna use MNIST as our standard task.

Here's an example of some of the digits in MNIST. These are ones that were correctly recognized by a neural net the first time it saw them, but they're ones where the neural net wasn't very confident. And you can see why. I've arranged these digits in standard scan line order: zeros, then ones, then twos and so on. If you look at a bunch of twos, like the ones in the green rectangle, you can see that if you knew they were handwritten digits you'd probably guess they were twos. But it's very hard to say what it is that makes them twos. There is nothing simple that they all have in common. In particular, if you try and overlay one on another you'll see it doesn't fit. And even if you skew it a bit, it's very hard to make them overlay on each other.

So a template isn't going to do the job. And in particular, a template is going to be very hard to find that will fit those twos in the green box and would also fit the things in the red boxes. So that's one thing that makes recognizing handwritten digits a good task for machine learning.

Now, I don't want you to think that's the only thing we can do. It's a relatively simple thing for our machine learning systems to do now. And to motivate the rest of the course, I want to show you some examples of much more difficult things. So we now have neural nets with approaching a hundred million parameters in them, that can recognize a thousand different object classes in 1.3 million high-resolution training images got from the web. So, there was a competition in 2010, and the best system got a 47 percent error rate if you look at its first choice, and a 25 percent error rate if you say it got it right if it was in its top five choices, which isn't bad for 1,000 different objects.

Jitendra Malik who's an eminent neural net skeptic, and a leading computer vision researcher, has said that this competition is a good test of whether deep neural networks can work well for object recognition. And a very deep neural network can now do considerably better than the thing that won the competition. It can get less than 40 percent error, for its first choice, and less than twenty percent error for its top five choices. I'll describe that in much more detail in lecture five.

Here are some examples of the kinds of images you have to recognize. These are images from the test set that it's never seen before. And below the examples, I'm showing you what the neural net thought the right answer was, where the length of the horizontal bar is how confident it was, and the correct answer is in red. So if you look in the middle, it correctly identified that as a snow plow. But you can see that its other choices are fairly sensible. It does look a little bit like a drilling platform. And if you look at its third choice, a lifeboat, it actually looks very like a lifeboat. You can see the flag on the front of the boat and the bridge of the boat and the flag at the back, and the high surf in the background. So its errors tell you a lot about how it's doing it, and they're very plausible errors. If you look on the left, it gets it wrong, possibly because the beak of the bird is missing and because the feathers of the bird look very like the wet fur of an otter. But it gets it in its top five, and it does better than me. I wouldn't know if that was a quail or a ruffed grouse or a partridge. If you look on the right, it gets it completely wrong. It says guillotine, and you can see why it says that. You can possibly see why it says orangutan, because of the sort of jungle-looking background and something orange in the middle. But it fails to get the right answer.

It can, however, deal with a wide range of different objects. If you look on the left, I would have said microwave as my first answer. The labels aren't very systematic. So actually, the correct answer there is electric range. And it does get it in its top five. In the middle, it's getting a turnstile, which is a distributed object. So it can do more than just recognize compact things. And it can also deal with pictures, as well as real scenes, like the bulletproof vest. And it makes some very cool errors. If you look at the image on the left, that's an earphone. It doesn't get anything like an earphone. But if you look at its fourth bet, it thinks it's an ant. And you might think that's crazy. But then if you look at it carefully, you can see it's a view of an ant from underneath. The eyes are looking down at you, and you can see the antennae behind it. It's not the kind of view of an ant you'd like to have if you were a greenfly. If you look at the one on the right, it doesn't get the right answer. But all of its answers are cylindrical objects.

Another task that neural nets are now very good at is speech recognition, or at least part of a speech recognition system. So speech recognition systems have several stages. First they pre-process the sound wave to get a vector of acoustic coefficients for each ten milliseconds of sound wave. And so they get 100 of those vectors per second. They then take a few adjacent vectors of acoustic coefficients, and they need to place bets on which part of which phoneme is being spoken. So they look at this little window and they say, in the middle of this window, what do I think the phoneme is, and which part of the phoneme is it? And a good speech recognition system will have many alternative models for a phoneme. And each model might have three different parts. So it might have many thousands of alternative fragments that it thinks this might be. And you have to place bets on all those thousands of alternatives. And then once you place those bets you have a decoding stage that does the best job it can of using plausible bets, but piecing them together into a sequence of bets that corresponds to the kinds of things that people say.

Currently, deep neural networks pioneered by George Dahl and Abdel-rahman Mohamed of the University of Toronto are doing better than previous machine learning methods for the acoustic model, and they're now beginning to be used in practical systems. So, Dahl and Mohamed developed a system that uses many layers of binary neurons to take some acoustic frames and make bets about the labels. They were doing it on a fairly small database and they used 183 alternative labels. And to get their system to work well, they did some pre-training, which will be described in the second half of the course. After standard post-processing, they got a 20.7 percent error rate on a very standard benchmark, which is kind of like the MNIST for speech. The best previous result on that benchmark for speaker-independent recognition was 24.4 percent. And a very experienced speech researcher at Microsoft Research realized that that was a big enough improvement that this would probably change the way speech recognition systems were done.

And indeed, it has. So, if you look at recent results from several different leading speech groups, Microsoft showed that this kind of deep neural network, when used as the acoustic model in the speech system, reduced the error rate from 27.4 percent to 18.5 percent. Alternatively, you could view it as reducing the amount of training data you needed from 2,000 hours down to 309 hours to get comparable performance. IBM, which has the best system for one of the standard large-vocabulary speech recognition tasks, showed that even its very highly tuned system, which was getting 18.8 percent, can be beaten by one of these deep neural networks. And Google, fairly recently, trained a deep neural network on a large amount of speech, 5,800 hours. That was still much less than they trained their mixture model on. But even with much less data, it did a lot better than the technology they had before. So it reduced the error rate from 16 percent to 12.3 percent, and the error rate is still falling.

And in the latest Android, if you do voice search, it's using one of these deep neural networks in order to do very good speech recognition.

What are neural networks?

Reasons to study neural computation

To understand how the brain actually works.

- It's very big and very complicated and made of stuff that dies when you poke it around. So we need to use computer simulations.

To understand a style of parallel computation inspired by neurons and their adaptive connections.

- Very different style from sequential computation.

- Should be good for things that brains are good at (e.g. vision)

- Should be bad for things that brains are bad at (e.g. 23 x 71)

To solve practical problems by using novel learning algorithms inspired by the brain (this course)

- Learning algorithms can be very useful even if they are not how the brain actually works.

A typical cortical neuron

Gross physical structure:

- There is one axon that branches

- There is a dendritic tree that collects input from other neurons.

Axons typically contact dendritic trees at synapses

- A spike of activity in the axon causes charge to be injected into the post-synaptic neuron.

Spike generation:

- There is an axon hillock that generates outgoing spikes whenever enough charge has flowed in at synapses to depolarize the cell membrane.

Figure labels: axon, body, axon hillock, dendritic tree.

Synapses

When a spike of activity travels along an axon and arrives at a synapse it causes vesicles of transmitter chemical to be released.

- There are several kinds of transmitter.

The transmitter molecules diffuse across the synaptic cleft and bind to receptor molecules in the membrane of the post-synaptic neuron thus changing their shape.

- This opens up holes that allow specific ions in or out.

How synapses adapt

The effectiveness of the synapse can be changed:

- vary the number of vesicles of transmitter.

- vary the number of receptor molecules.

Synapses are slow, but they have advantages over RAM

- They are very small and very low-power.

- They adapt using locally available signals

- But what rules do they use to decide how to change?

How the brain works on one slide!

Each neuron receives inputs from other neurons

- A few neurons also connect to receptors.

- Cortical neurons use spikes to communicate.

The effect of each input line on the neuron is controlled by a synaptic weight

- The weights can be positive or negative.

The synaptic weights adapt so that the whole network learns to perform useful computations

- Recognizing objects, understanding language, making plans, controlling the body.

You have about 10^11 neurons each with about 10^4 weights.

- A huge number of weights can affect the computation in a very short time. Much better bandwidth than a workstation.

Modularity and the brain

Different bits of the cortex do different things.

- Local damage to the brain has specific effects.

- Specific tasks increase the blood flow to specific regions.

But cortex looks pretty much the same all over.

- Early brain damage makes functions relocate.

Cortex is made of general purpose stuff that has the ability to turn into special purpose hardware in response to experience.

- This gives rapid parallel computation plus flexibility.

- Conventional computers get flexibility by having stored sequential programs, but this requires very fast central processors to perform long sequential computations.

Transcript

In this video, I'm gonna tell you a little bit about real neurons in the real brain, which provide the inspiration for the artificial neural networks that we're gonna learn about in this course. In most of the course, we won't talk much about real neurons, but I wanted to give you a quick overview at the beginning.

There are several different reasons to study how networks of neurons can compute things. The first is to understand how the brain actually works. You might think we could do that just by experiments on the brain. But it's very big and complicated, and it dies when you poke it around. And so we need to use computer simulations to help us understand what we're discovering in empirical studies. The second is to understand a style of parallel computation that's inspired by the fact that the brain can compute with a big parallel network made of relatively slow neurons. If we can understand that style of parallel computation we might be able to make better parallel computers. It's very different from the way computation is done on a conventional serial processor. It should be very good for things that brains are good at, like vision, and it should also be bad for things that brains are bad at, like multiplying two numbers together. A third reason, which is the relevant one for this course, is to solve practical problems by using novel learning algorithms that were inspired by the brain. These algorithms can be very useful even if they're not actually how the brain works. So in most of this course we won't talk much about how the brain actually works. It's just used as a source of inspiration to tell us that big, parallel networks of neurons can compute very complicated things. I'm gonna talk more in this video, though, about how the brain actually works.

A typical cortical neuron has a gross physical structure that consists of a cell body, an axon where it sends messages to other neurons, and a dendritic tree where it receives messages from other neurons. Where an axon from one neuron contacts a dendritic tree of another neuron, there's a structure called a synapse. And a spike of activity traveling along the axon causes charge to be injected into the post-synaptic neuron at a synapse. A neuron generates spikes when it's received enough charge in its dendritic tree to depolarize a part of the cell body called the axon hillock. And when that gets depolarized, the neuron sends a spike out along its axon. And the spike's just a wave of depolarization that travels along the axon.

Synapses themselves have interesting structure. They contain little vesicles of transmitter chemical, and when a spike arrives in the axon it causes these vesicles to migrate to the surface and be released into the synaptic cleft. There are several different kinds of transmitter chemical. There are ones that implement positive weights and ones that implement negative weights. The transmitter molecules diffuse across the synaptic cleft and bind to receptor molecules in the membrane of the post-synaptic neuron, and by binding to these big molecules in the membrane they change their shape, and that creates holes in the membrane. These holes allow specific ions to flow in or out of the post-synaptic neuron, and that changes its state of depolarization. Synapses adapt, and that's what most of learning is: changing the effectiveness of a synapse. They can adapt by varying the number of vesicles that get released when a spike arrives, or by varying the number of receptor molecules that are sensitive to the released transmitter molecules. Synapses are very slow compared with computer memory. But they have a lot of advantages over the random access memory on a computer: they're very small and very low power. And they can adapt. That's the most important property. They use locally available signals to change their strengths, and that's how we learn to perform complicated computations. The issue, of course, is how do they decide how to change their strength? What are the rules for how they should adapt?

So, all on one slide, this is how the brain works. Each neuron receives inputs from other neurons. A few of the neurons receive inputs from the receptors. It's a large number of neurons, but only a small fraction of them. And the neurons communicate with each other within the cortex by sending these spikes of activity. The effect of an input line on a neuron is controlled by a synaptic weight, which can be positive or negative. And these synaptic weights adapt. And by adapting these weights the whole network learns to perform different kinds of computation. For example recognizing objects, understanding language, making plans, controlling the movements of your body. You have about ten to the eleven neurons, each of which has about ten to the four weights. So you probably have ten to the fifteen or maybe only about ten to the fourteen synaptic weights. And a huge number of these weights, quite a large fraction of them, can affect the ongoing computation in a very small fraction of a second, in a few milliseconds. That's much better bandwidth to stored knowledge than even a modern workstation has.

One final point about the brain is that the cortex is modular, or at least it learns to be modular. Different bits of the cortex end up doing different things. Genetically, the inputs from the senses go to different bits of the cortex. And that determines a lot about what they end up doing. If you damage the brain of an adult, local damage to the brain causes specific effects. Damage to one place might cause you to lose your ability to understand language. Damage to another place might cause you to lose your ability to recognize objects. We know a lot about how functions are located in the brain because when you use a part of the brain for doing something it requires energy, and so it demands more blood flow, and you can see the blood flow in a brain scanner. That allows you to see which bits of the brain you're using for particular tasks. But the remarkable thing about cortex is it looks pretty much the same all over, and that strongly suggests that it's got a fairly flexible universal learning algorithm in it. That's also suggested by the fact that if you damage the brain early on, functions will relocate to other parts of the brain. So it's not genetically predetermined, at least not directly, which part of the brain will perform which function. There are convincing experiments on baby ferrets that show that if you cut off the input to the auditory cortex that comes from the ears, and instead reroute the visual input to auditory cortex, then the auditory cortex that was destined to deal with sounds will actually learn to deal with visual input, and create neurons that look very like the neurons in the visual system.

This suggests the cortex is made of general purpose stuff that has the ability to turn into special purpose hardware for particular tasks in response to experience. And that gives you a nice combination of rapid parallel computation once you have learnt, plus flexibility, so you can learn new functions. So you are learning to do the parallel computation. It's quite like an FPGA, where you build standard parallel hardware, and then after it's built, you put in information that tells it what particular parallel computation to do. Conventional computers get their flexibility by having a stored sequential program. But this requires very fast central processors to access the lines in the sequential program and perform long sequential computations.

Some simple models of neurons

Idealized neurons

To model things we have to idealize them (e.g. atoms).

- Idealization removes complicated details that are not essential for understanding the main principles.

- It allows us to apply mathematics and to make analogies to other, familiar systems.

- Once we understand the basic principles, it's easy to add complexity to make the model more faithful.

It is often worth understanding models that are known to be wrong (but we must not forget that they are wrong!)

- E.g. neurons that communicate real values rather than discrete spikes of activity.

Linear neurons

These are simple but computationally limited.

- If we can make them learn we may get insight into more complicated neurons.

$$y = b + \sum_i x_i w_i$$

Here $y$ is the output, $b$ is the bias, $x_i$ is the $i$-th input, $w_i$ is the weight on the $i$-th input, and $i$ indexes the input connections.

Figure: output $y$ plotted against the total input $b + \sum_i x_i w_i$.

Binary threshold neurons

McCulloch-Pitts (1943): influenced Von Neumann.

- First compute a weighted sum of the inputs.

- Then send out a fixed size spike of activity if the weighted sum exceeds a threshold.

- McCulloch and Pitts thought that each spike is like the truth value of a proposition and each neuron combines truth values to compute the truth value of another proposition!

Figure: output (0 or 1) plotted against the weighted input, with the step at the threshold.

There are two equivalent ways to write the equations for a binary threshold neuron:

$$z = \sum_i x_i w_i, \qquad y = \begin{cases} 1 & \text{if } z \ge \theta \\ 0 & \text{otherwise} \end{cases}$$

$$z = b + \sum_i x_i w_i, \qquad y = \begin{cases} 1 & \text{if } z \ge 0 \\ 0 & \text{otherwise} \end{cases}$$

The two forms agree when $\theta = -b$.

Rectified Linear Neurons (sometimes called linear threshold neurons)

They compute a linear weighted sum of their inputs. The output is a non-linear function of the total input.

$$z = b + \sum_i x_i w_i, \qquad y = \begin{cases} z & \text{if } z > 0 \\ 0 & \text{otherwise} \end{cases}$$

Sigmoid neurons

These give a real-valued output that is a smooth and bounded function of their total input.

- Typically they use the logistic function.

- They have nice derivatives which make learning easy (see lecture 3).

$$z = b + \sum_i x_i w_i, \qquad y = \frac{1}{1 + e^{-z}}$$

Figure: output $y$ plotted against $z$, rising from 0 to 1 and passing through 0.5 at $z = 0$.

Stochastic binary neurons

These use the same equations as logistic units.
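Since the stochastic unit reuses the logistic equations, it may help to see the deterministic units defined so far side by side. The following is a minimal Python sketch of those definitions; the function names are assumptions, and the binary threshold unit uses the bias form in which the threshold has been absorbed as $\theta = -b$.

```python
import math


def total_input(x, w, b):
    """z = b + sum_i x_i * w_i"""
    return b + sum(xi * wi for xi, wi in zip(x, w))


def linear(z):
    return z


def binary_threshold(z):
    return 1 if z >= 0 else 0


def rectified_linear(z):
    return z if z > 0 else 0.0


def logistic(z):
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical inputs, weights and bias: z = 0.5 + 1.0*0.5 + 2.0*(-1.0) = -1.0
z = total_input(x=[1.0, 2.0], w=[0.5, -1.0], b=0.5)
for unit in (linear, binary_threshold, rectified_linear, logistic):
    print(unit.__name__, unit(z))
```

All four units share the same total input and differ only in the function applied to it.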

- But they treat the output of the logistic as the probability of producing a spike in a short time window.

$$z = b + \sum_i x_i w_i, \qquad p(s = 1) = \frac{1}{1 + e^{-z}}$$

We can do a similar trick for rectified linear units:

- The output is treated as the Poisson rate for spikes.

Neural Networks for Machine Learning, Lecture 1d: A simple example of learning

Geoffrey Hinton with Nitish Srivastava and Kevin Swersky

A very simple way to recognize handwritten shapes

Consider a neural network with two layers of neurons.

- Neurons in the top layer represent known shapes.

- Neurons in the bottom layer represent pixel intensities.

A pixel gets to vote if it has ink on it.

- Each inked pixel can vote for several different shapes.

The shape that gets the most votes wins.

How to display the weights

Give each output unit its own “map” of the input image and display the weight coming from each pixel in the location of that pixel in the map. Use a black or white blob with the area representing the magnitude of the weight and the color representing the sign.

Figure: weight maps for the ten output units (1 2 3 4 5 6 7 8 9 0) shown above the input image.

How to learn the weights

Show the network an image and increment the weights from active pixels to the correct class. Then decrement the weights from active pixels to whatever class the network guesses.

The learned weights

The details of the learning algorithm will be explained in future lectures.

Why the simple learning algorithm is insufficient

A two layer network with a single winner in the top layer is equivalent to having a rigid template for each shape.
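The voting network and its update rule can be sketched in a few lines of Python, which also makes the template reading concrete: each output unit's row of weights acts as a template, and its vote is the overlap of that template with the inked pixels. The function names, the 4-pixel "images" and the three toy classes are assumptions for illustration; the lecture's demonstration uses MNIST digits.

```python
def classify(weights, pixels):
    """Each inked pixel votes with its weight; the class with most votes wins."""
    votes = [sum(w for w, p in zip(row, pixels) if p) for row in weights]
    return votes.index(max(votes))


def train_step(weights, pixels, correct):
    """Increment weights from active pixels to the correct class, then
    decrement weights from active pixels to the guessed class."""
    guess = classify(weights, pixels)
    for i, p in enumerate(pixels):
        if p:
            weights[correct][i] += 1
            weights[guess][i] -= 1
    return guess


# Hypothetical 4-pixel shapes, one per class.
images = [([1, 1, 0, 0], 0), ([0, 0, 1, 1], 1), ([1, 0, 0, 1], 2)]
weights = [[0, 0, 0, 0] for _ in range(3)]

for _ in range(5):  # a few passes over the toy training set
    for pixels, label in images:
        train_step(weights, pixels, label)

print(weights)
print([classify(weights, pixels) for pixels, _ in images])  # [0, 1, 2]
```

When the guess is already correct the increment and decrement cancel, so the weights only move on mistakes.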

- The winner is the template that has the biggest overlap with the ink.

The ways in which hand-written digits vary are much too complicated to be captured by simple template matches of whole shapes.

- To capture all the allowable variations of a digit we need to learn the features that it is composed of.

Examples of handwritten digits that can be recognized correctly the first time they are seen

Neural Networks for Machine Learning, Lecture 1e: Three types of learning

Types of learning task

Supervised learning

- Learn to predict an output when given an input vector.

Reinforcement learning

- Learn to select an action to maximize payoff.

Unsupervised learning

- Discover a good internal representation of the input.

Two types of supervised learning

Each training case consists of an input vector x and a target output t.

Regression: The target output is a real number or a whole vector of real numbers.

- The price of a stock in 6 months time.

- The temperature at noon tomorrow.

Classification: The target output is a class label.

- The simplest case is a choice between 1 and 0.

- We can also have multiple alternative labels.

How supervised learning typically works

We start by choosing a model-class: $y = f(\mathbf{x}; \mathbf{W})$

- A model-class, $f$, is a way of using some numerical parameters, $\mathbf{W}$, to map each input vector, $\mathbf{x}$, into a predicted output $y$.

Learning usually means adjusting the parameters to reduce the discrepancy between the target output, $t$, on each training case and the actual output, $y$, produced by the model.

- For regression, $\frac{1}{2}(y - t)^2$ is often a sensible measure of the discrepancy.

- For classification there are other measures that are generally more sensible (they also work better).

Reinforcement learning

In reinforcement learning, the output is an action or sequence of actions and the only supervisory signal is an occasional scalar reward.

- The goal in selecting each action is to maximize the expected sum of the future rewards.

- We usually use a discount factor for delayed rewards so that we don’t have to look too far into the future.

Reinforcement learning is difficult:

- The rewards are typically delayed so it’s hard to know where we went wrong (or right).

- A scalar reward does not supply much information.

This course cannot cover everything and reinforcement learning is one of the important topics we will not cover.

Unsupervised learning

For about 40 years, unsupervised learning was largely ignored by the machine learning community.

- Some widely used definitions of machine learning actually excluded it.

- Many researchers thought that clustering was the only form of unsupervised learning.

It is hard to say what the aim of unsupervised learning is.

- One major aim is to create an internal representation of the input that is useful for subsequent supervised or reinforcement learning.

- You can compute the distance to a surface by using the disparity between two images. But you don’t want to learn to compute disparities by stubbing your toe thousands of times.

Other goals for unsupervised learning

It provides a compact, low-dimensional representation of the input.

- High-dimensional inputs typically live on or near a low-dimensional manifold (or several such manifolds).

- Principal Component Analysis is a widely used linear method for finding a low-dimensional representation.

It provides an economical high-dimensional representation of the input in terms of learned features.

- Binary features are economical.

- So are real-valued features that are nearly all zero.

It finds sensible clusters in the input.

- This is an example of a very sparse code in which only one of the features is non-zero.
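The last point, that clustering is a very sparse code, can be made concrete with a short Python sketch. It assumes the cluster centres have already been found by some clustering method (the `centroids` below are made up), and simply encodes an input as a vector with a single non-zero feature marking its nearest cluster.

```python
def one_hot_cluster_code(x, centroids):
    """Encode x as a vector with a single 1 at the index of its nearest centroid."""
    sq_dists = [sum((a - b) ** 2 for a, b in zip(x, c)) for c in centroids]
    winner = sq_dists.index(min(sq_dists))
    return [1 if k == winner else 0 for k in range(len(centroids))]


# Hypothetical cluster centres in a 2-D input space.
centroids = [[0.0, 0.0], [5.0, 5.0], [0.0, 5.0]]

print(one_hot_cluster_code([4.5, 5.2], centroids))  # [0, 1, 0]
print(one_hot_cluster_code([0.3, 4.1], centroids))  # [0, 0, 1]
```

The code has as many features as there are clusters, but exactly one of them is non-zero for any input.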
